Struggling with a Capture The Flag event? Say no more, I've got just the solution for you. Send the admins to your website and leak all their flags!

This would have been possible in CTFd versions < 3.7.2 due to an XS-Leak vulnerability. This post describes the process of finding and exploiting this vulnerability with all the technical details. To quickly see the impact, check out this demo video.

Discovery

I've always been a fan of client-side web exploits, and some weeks ago I wanted to experiment more with XS-Leaks. This class of vulnerability takes advantage of side-channels in the browser to infer information about a cross-site response. For example, a website like target.com could return some sensitive data in its response when you are authenticated. Another website like attacker.com should not be able to read this sensitive data in the response. Normally, the Same-Origin Policy (SOP) prevents this.

With XS-Leaks, we still cannot look at the content of a response but we see its side-effects and other bits that are exposed cross-site. One simple example is Frame Counting where a window reference to another site has a .length property that any other page can access. This tells the attacker how many iframes are inside of the response. While it may not sound very exciting yet, behaviours like this can be very well exploited using XS-Search. This requires a search functionality on the target website where the results generate different detectable side-channels. The attacker can then search character-by-character for a string until their XS-Leak gets triggered, marking an abnormal result, maybe a search result. They can then add this to the searching prefix and continue with the next character, eventually leaking whole strings.
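The character-by-character search described above can be sketched in a few lines of Python. This is only a model of the loop: the `oracle` callback stands in for whatever side-channel tells the attacker that a query matched, and the names `xs_search`, `demo_oracle` and `SECRET` are made up for this illustration.

```python
import string
from typing import Callable


def xs_search(oracle: Callable[[str], bool], prefix: str,
              alphabet: str = string.ascii_lowercase + string.digits + "_}",
              max_len: int = 64) -> str:
    """Extend `prefix` one character at a time while the oracle reports a match."""
    known = prefix
    while len(known) < max_len:
        for ch in alphabet:
            if oracle(known + ch):
                known += ch  # the side-channel fired: keep this character
                break
        else:
            # No candidate matched: nothing longer exists, or the alphabet is too small.
            break
        if known.endswith("}"):
            break  # closing brace reached, the flag is complete
    return known


# Stand-in oracle for demonstration only. A real attack cannot read the secret
# and would replace this with an observation of the side-channel.
SECRET = "CTF{x5_l34k}"


def demo_oracle(candidate: str) -> bool:
    return candidate in SECRET


leaked = xs_search(demo_oracle, "CTF{")
print(leaked)
```

Each round costs at most one probe per character in the alphabet, which is why a cheap and reliable oracle matters so much for this kind of attack.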

Now that you roughly know what to look for and the big impact it can have, we will explore a popular Capture The Flag platform named "CTFd". I chose this target because I had not heard of vulnerabilities in it before, so I thought it might not have been extensively tested, especially for these relatively new XS-Leak techniques. You can set it up locally or play around publicly on the demo.ctfd.io website.


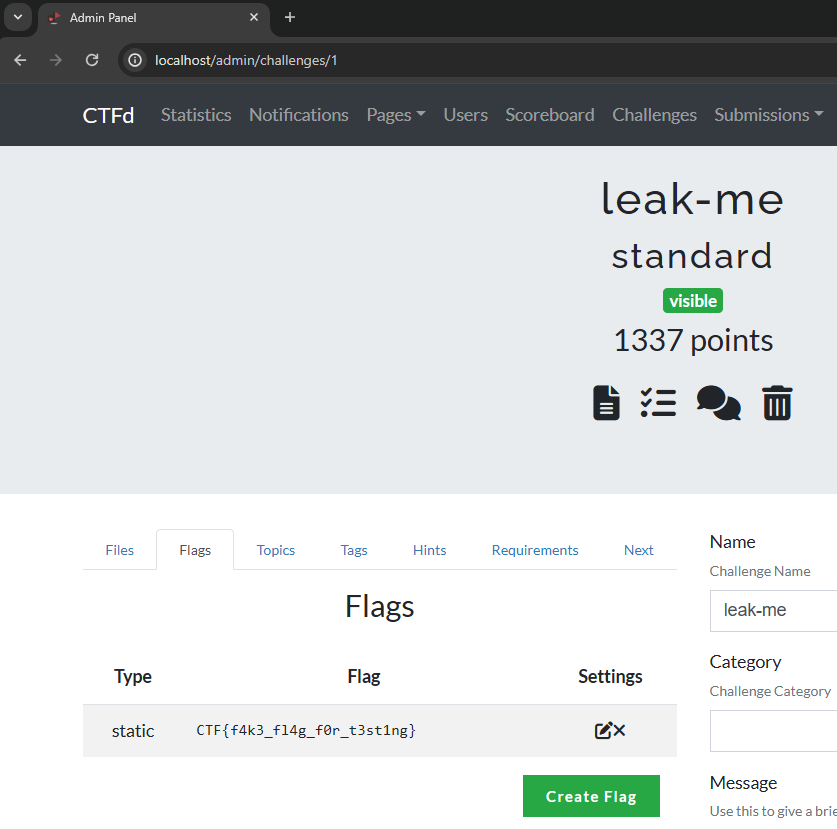

One of the crown jewels of CTFd instances is the flags. These are supposed to be kept secret at all times and are the required proof for solving a challenge. We should target functionalities involving flags to reach a high impact. The first may be viewing challenge flags directly inside the challenge editor:

In the background, a request to /api/v1/flags/1 fetches the flags for this challenge. While it looks interesting, there doesn't seem to be an obvious search function for flags here. The table of challenges does have a search bar, but this doesn't search for flag contents, only the Name, ID, Category and Type. This functionality does not seem vulnerable.

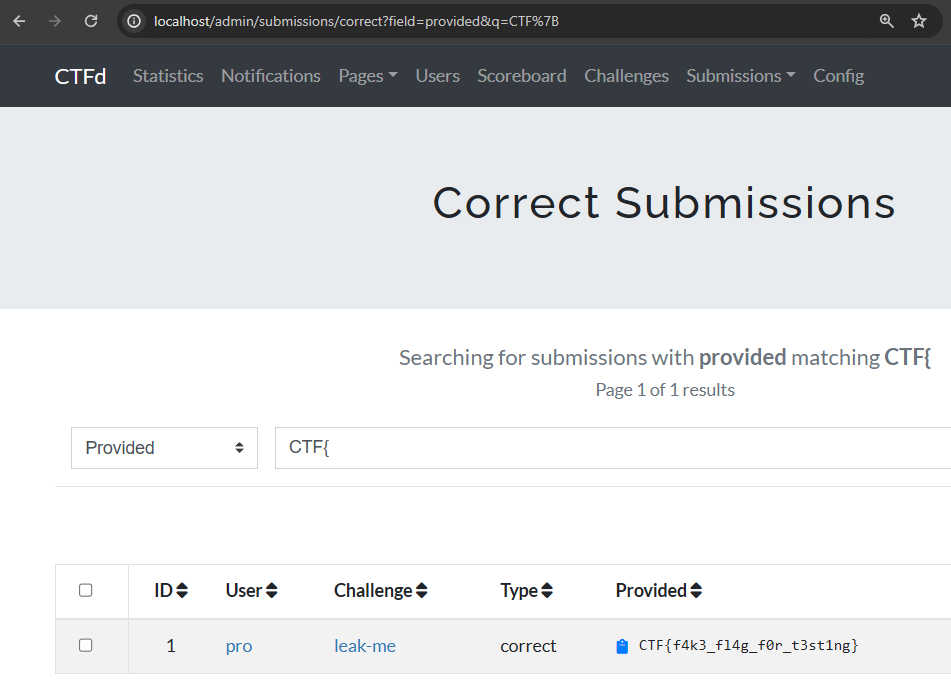

Next up, we can also find flags in a management feature called Submissions. Here, administrators can search for correct and incorrect flag submissions to spot common mistakes or abuse. "Searching for correct flags", that sounds interesting.

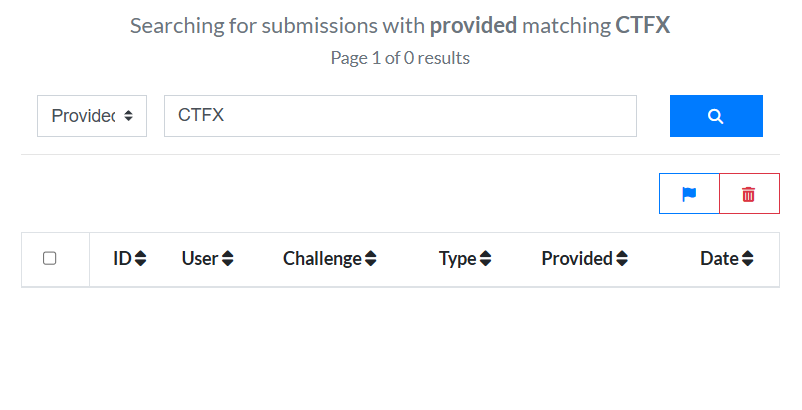

After any user has solved a challenge, their submission ends up in this table. We can search it with the &q= query parameter filtering for ?field=provided. This sounds like a good candidate for XS-Search. But can we differentiate the response? A non-matching query returns an empty table:

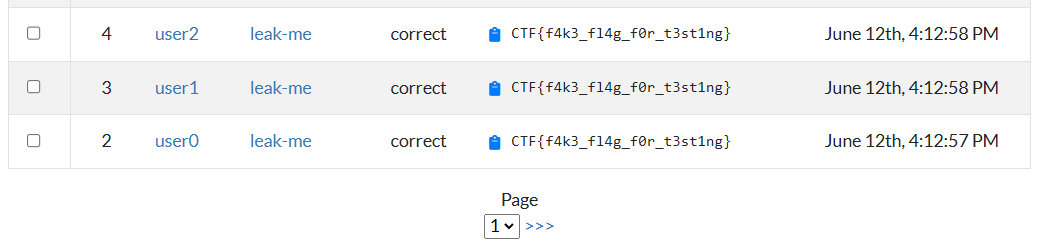

There are no iframes on either response, so we cannot use Frame Counting. Other techniques also seem difficult with the only change being an extra <td> item. We would ideally get some special '404 Not Found' page for when a query doesn't match, which potentially contains larger changes. Results start to get more interesting when we add 50+ correct submissions, however. At this point, the table becomes too large and we can only view it in paginated chunks:

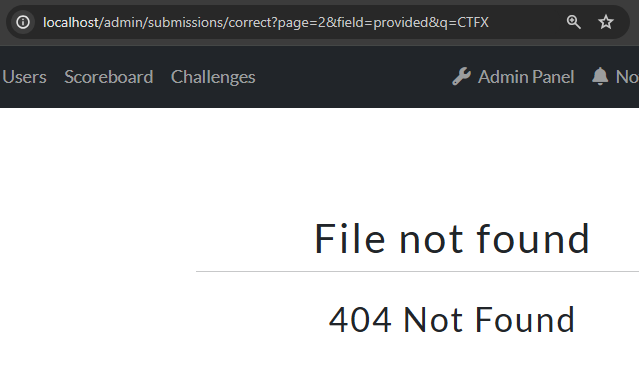

The 2nd page is fetched using ?page=2 in the address bar. Something interesting happens when we change the &q= parameter now, though:

This is suddenly a 404 response, while previously on page 1 it was an empty table! This seems like an even better candidate for XS-Search because we may be able to get a leak off of this. While the HTML differs quite a lot, even with different scripts and fonts being loaded, there are still no iframes in either response. We can however notice that a matching 2nd page returns the 200 OK status code, while the non-matching response is a 404 Not Found error:

There is a whole section on the XS-Leaks Wiki about "Error Events" that detect if a request succeeded (200) or failed (404). This looks very promising using a <script> tag and an onerror/onload handler to find whether it loaded successfully or not. Unfortunately, this attack doesn't work anymore for two reasons. Firstly, the response needs to be valid JavaScript which our HTML-responses are not. This causes the error event to fire in both cases. Secondly, the requests both end up at /login after a 302 redirect. Our requests are not authenticated cross-site.

While cookies were always being sent in the early days of the web, in modern times, there are protections like SameSite that prevent cookies from being sent in these background requests (third-party contexts). CTFd uses SameSite=Lax cookies by default, meaning they will only be sent if the address bar matches the request's origin (top-level context). We can use APIs like window.open() to open the URL in a top-level context, which will correctly send the authentication cookies, but won't allow us to probe for error or load events. Many of the common XS-Leak techniques are prevented by Lax cookies.
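As a rough mental model of the rule above, the following Python function decides whether a cookie would accompany a request. It is a deliberate simplification (it ignores the Secure attribute, browser-specific exceptions, and the exact definition of "same site"), and the function and parameter names are invented for this sketch.

```python
SAFE_METHODS = {"GET", "HEAD", "OPTIONS", "TRACE"}


def cookie_is_sent(samesite: str, same_site_request: bool,
                   top_level_navigation: bool, method: str = "GET") -> bool:
    """Simplified SameSite decision: does the cookie go along with this request?"""
    if same_site_request:
        return True  # same-site requests always carry the cookie
    if samesite == "None":
        return True  # opted in to cross-site sending
    if samesite == "Lax":
        # Cross-site: only top-level navigations using a safe method qualify.
        return top_level_navigation and method.upper() in SAFE_METHODS
    return False  # Strict: never sent cross-site


# A cross-site <script src=...> is a subresource load, not a navigation.
print(cookie_is_sent("Lax", same_site_request=False, top_level_navigation=False))
# window.open() or changing a popup's location is a top-level GET navigation.
print(cookie_is_sent("Lax", same_site_request=False, top_level_navigation=True))
```

The second case is the only cross-site door that Lax leaves open, which is why the rest of the attack is built around top-level navigations.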

After searching around for a while and experimenting, I eventually stumbled upon this interesting behaviour: In Chromium, 200 responses are saved to the browser's history, but 404 responses are not. This is an interesting difference that means a matching query will be saved in the history after visiting it in a top-level context, while a non-matching query won't be. Although, we cannot simply leak the history from our attacker's site, right? Right?!?

Leaking history

The XS-Leaks Wiki briefly mentions this in "CSS Tricks".

"Using the CSS :visited selector, it’s possible to apply a different style for URLs that have been visited."

You might think this would trivially allow extracting the colour from the link or adding a background: url(...) style to trigger an exfiltration request. You can try it, but this won't work on modern browsers. The "Privacy and the :visited selector" article explains why there are now very restricted rules on what styles can be applied to this selector and that some APIs will lie and return colours as if this selector wasn't applied at all, preventing the attacker from being able to leak the history without interaction. But remember, the user can still see a different colour, even though the window it is displayed on cannot detect it. This still allows attacks that require a user to follow simple instructions that leak the colour of an element:

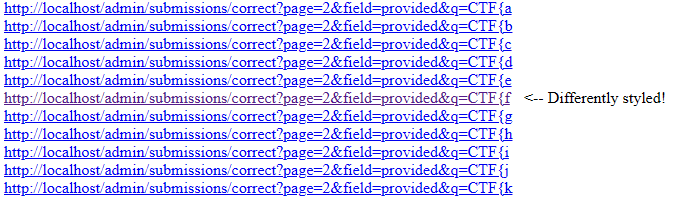

The page above presents itself as a captcha, containing white and black squares that should manually be clicked. These clicks are logged by the website, revealing what elements had :visited styles applied, and thus what URLs were in the user's history. We can re-use this idea for our case, placing all possible URLs in HTML with some clever styling to make the user click a differently-coloured one:

We need to make sure all possible matches are visited in a top-level context to be attempted to be stored in the browser's history. We could use window.open() for this, but doing so requires a user interaction for every popup. Instead, we can open a popup window once, and then use .location = on it multiple times without user interaction to change the loaded URL in the existing popup. This way we can:

- Visit every /submissions URL one by one to save the 200 OK response in the history

- Display all the URLs with special :visited styling

- Ask the user to click the uniquely coloured one!
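To make the first two steps concrete, here is a small Python sketch that generates one candidate URL per character. The base URL and the function name `candidate_urls` are placeholders for this example; the path and parameters are the ones discussed earlier in this post.

```python
import string
from urllib.parse import urlencode

BASE = "http://localhost"  # hypothetical CTFd instance


def candidate_urls(prefix: str, alphabet: str, base: str = BASE) -> dict:
    """Map each candidate character to the page-2 search URL that tests it."""
    urls = {}
    for ch in alphabet:
        query = urlencode({"field": "provided", "q": prefix + ch, "page": 2})
        urls[ch] = f"{base}/admin/submissions/correct?{query}"
    return urls


urls = candidate_urls("CTF{", string.ascii_lowercase)
print(urls["a"])
```

The same URL string would then be used twice: once as the popup's location, and once as the href of the link whose :visited styling reveals the result.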

Proof of Concept

The idea above was implemented in the following gist, with a demo video of it below:

https://gist.github.com/JorianWoltjer/8b61dcac51ede1e5bfe6e3f6527662c9

Note: Underscores (_) were slightly special because they match any character in the query. When no black boxes are shown we know it must have been the underscore (skip button).
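The underscore behaviour is what you would expect if the search is backed by a SQL LIKE filter, where _ is a single-character wildcard. That is an assumption here, and the table below is a toy stand-in rather than CTFd's real schema, but it reproduces the effect with an in-memory SQLite database:

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE submissions (provided TEXT)")  # toy table, not CTFd's schema
conn.execute("INSERT INTO submissions VALUES ('CTF{abc}')")


def matches(q: str) -> bool:
    """Substring search using LIKE, as a search box might do (assumed)."""
    row = conn.execute(
        "SELECT COUNT(*) FROM submissions WHERE provided LIKE ?", (f"%{q}%",)
    ).fetchone()
    return row[0] > 0


print(matches("CTF{a"))  # literal prefix
print(matches("CTF{_"))  # underscore acts as "any single character"
print(matches("CTF{x"))  # wrong character
```

Because the underscore query matches whatever the real next character is, it cannot be told apart from that character by this oracle alone.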

It creates boxes coloured by their <a> tags just like the captcha example, but only one link will be visited. Before adding the anchor to the HTML, we visit the URL in a top-level context by changing the popup's .location attribute. The 200 vs 404 response here will decide if the link is coloured or not, which the user will tell the application by clicking on it. This repeats until the whole flag is found!

Improvement using Padding

The above works, and is definitely worth a report, but the requirement of 51 solves on a challenge before being able to leak a flag bugged me. At that point, you should just solve it yourself. I started wondering whether there was any way to reduce the number of correct submissions required.

The reason is that a 404 can only occur from the 2nd page onwards, and each page holds 50 rows. We were looking at the /admin/submissions/correct endpoint because this ensures that a leaked flag is correct. We could in theory also leak /admin/submissions/incorrect flags but this does not seem useful. There is also the generic /admin/submissions endpoint that shows any submissions, correct or incorrect. This would show some flags we want to leak but also all incorrect submissions.

We can influence the submissions by submitting wrong flags. We can abuse this by inserting our own wrong submissions that always match the queries, resulting in every request already having some 'padding'.

If we submit 50 wrong flags that match the current query, the submissions page for the XS-Leak will contain these 50 at least. If there was a correct flag also submitted matching the query, the table will overflow to 51 rows and create a 2nd page (200). Otherwise, the 2nd page will still be an error (404). This requires us to submit 50 wrong flags for every test we do. While possible, it makes the attack much slower and heavier on the server. The steps for doing so would be as follows:

1. Create a query like CTF{a

2. Submit 'CTF{a' 50 times to the CTFd instance spread over different accounts

3. Change the window location to ?q=CTF{a to save a 200 or 404 in history

4. Add <a href=...> pointing to the visited window location

5. Continue at step 1 with the next query (eg. CTF{b)
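The effect of the padding can be checked with a tiny model of the search and pagination. This is not CTFd code: `second_page_status` is a made-up helper that treats the search as a plain substring match and returns the status the 2nd page would have.

```python
def second_page_status(submissions: list, query: str, per_page: int = 50) -> int:
    """Return 200 if the matching rows spill onto page 2, otherwise 404."""
    matching = [s for s in submissions if query in s]
    return 200 if len(matching) > per_page else 404


real_flag = "CTF{abc}"  # the one correct submission we want to leak

# Padding for the right guess: 50 wrong flags plus the real one makes 51 rows.
padding_a = ["CTF{a"] * 50
print(second_page_status(padding_a + [real_flag], "CTF{a"))

# Padding for a wrong guess: the real flag does not match, so only 50 rows.
padding_b = ["CTF{b"] * 50
print(second_page_status(padding_b + [real_flag], "CTF{b"))
```

The padding fills page 1 exactly, so a single matching correct submission is enough to tip the result from 404 to 200.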

There is a cleaner solution, because our wrong submissions only have to match the query. This allows us to be more flexible because there may be any prefixes or suffixes around the flag and it would still match. If we concatenate all possible flag prefixes together and send them all at once, we only need 50 wrong submissions at the start of each round, not in between each query. This significantly reduces the number of requests we need to make, from 2000 to only 50 per character. The steps would now look like this:

- Submit a string that matches all queries this round, like 'CTF{a,CTF{b,CTF{c...'

- Visit every /submissionsURL one by one to save the 200 OK response in the history

- Display all the URLs with special :visited styling
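A sketch of the combined padding string and the request arithmetic follows. The 40-character alphabet is an assumption picked for this example (it happens to reproduce the 2000 figure as 40 queries times 50 submissions), and `build_padding` is a hypothetical helper name.

```python
import string

ALPHABET = string.ascii_lowercase + string.digits + "{}-!"  # 40 characters, assumed
PAGE_SIZE = 50


def build_padding(prefix: str, alphabet: str = ALPHABET) -> str:
    """One wrong flag that contains every candidate query of this round."""
    return ",".join(prefix + ch for ch in alphabet)


padding = build_padding("CTF{")
print(padding[:23] + "...")

# Every query of the round is a substring of the single padding string.
print(all(("CTF{" + ch) in padding for ch in ALPHABET))

# Padding submissions per leaked character, before and after the change.
per_query_padding = len(ALPHABET) * PAGE_SIZE  # 50 submissions for each query
per_round_padding = PAGE_SIZE                  # 50 submissions for the whole round
print(per_query_padding, per_round_padding)
```

The separator is arbitrary; anything works as long as each candidate appears somewhere inside the submitted string.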

This idea reduces the required number of solves to 1 because we need a correct submission to match against. Due to padding, the 1st page is always filled, forcing any correct submission to the 2nd page. This idea is implemented in the following gist, with a demo video of it below:

https://gist.github.com/JorianWoltjer/295ac5d8e7595a073116eba6f091506e

Note: We use a helper Flask server here that has 50 logged-in accounts, and can submit the padding flags quickly.

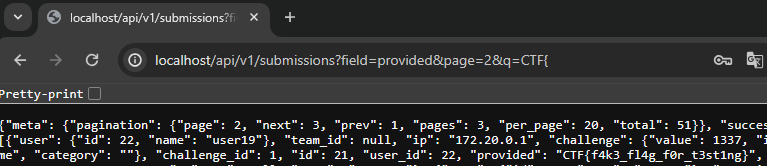

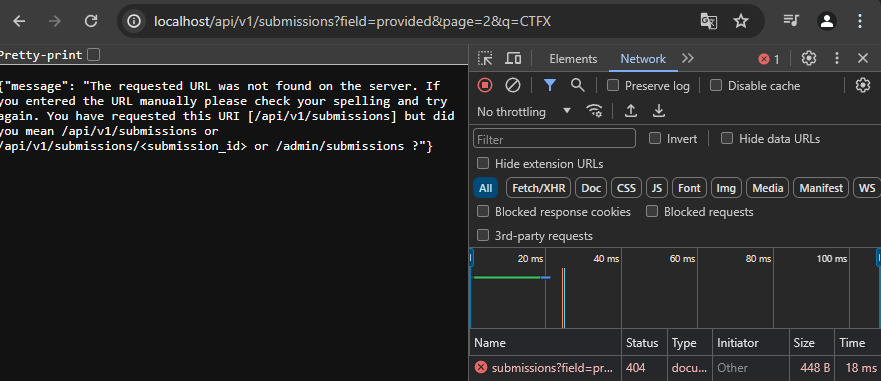

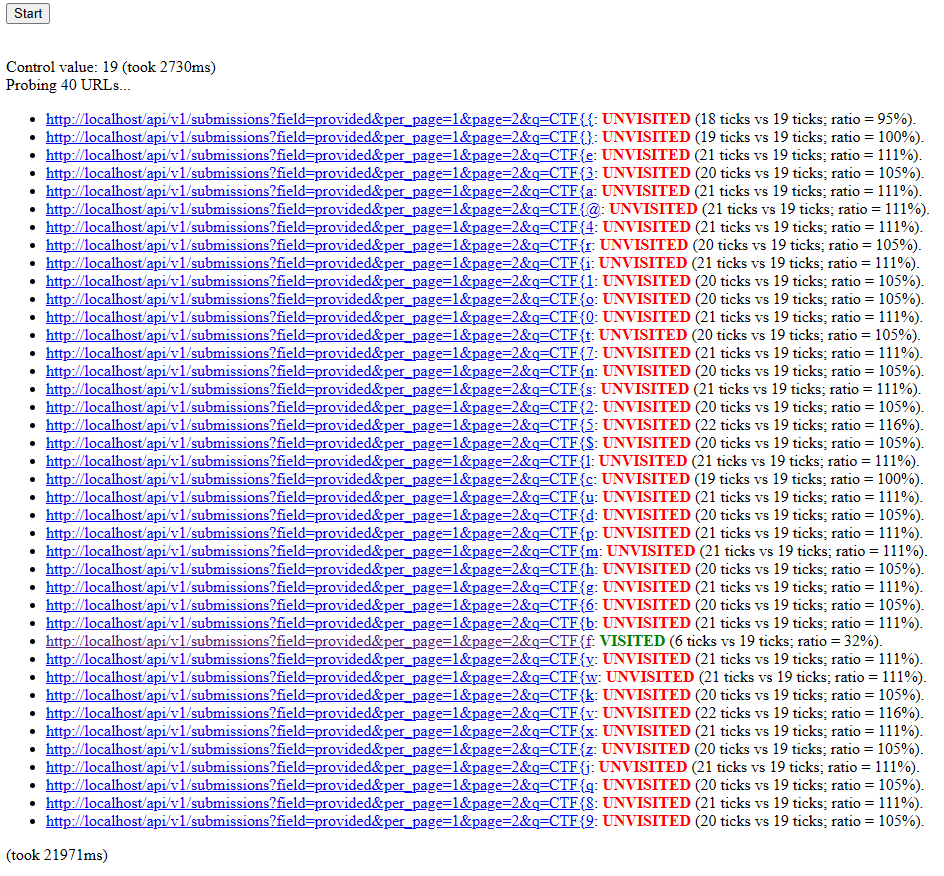

The attack is already getting better, but we're not done yet. Looking at the CTFd API, we can find a similar parameter q for fetching submissions. Would it be vulnerable in the same way? Let's pass it the same parameters as the regular HTML page:

Nice! The 'CTF{' query has a 200 response, while 'CTFX' gives a 404. It still only works on the 2nd page, but this API response shows something interesting in its JSON:

"per_page": 20

Every page shows only 20 rows instead of 50, an improvement already. Can we alter this value?

http://localhost/api/v1/submissions?field=provided&page=2&per_page=1&q=CTF{

We sure can! After adding &per_page=1 as a parameter, the response contains only 1 row. Because we still need the 2nd page, we only need 1 wrong submission as padding to get it there, which is way better than the 50 or 20 we had before. We still only need 1 correct submission to find a difference in the response.

Implementing this is very similar to the previous, only changing the endpoint to /api/v1/submissions, adding &per_page=1, and sending 1 padding flag using the helper.
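The relationship between page size and required padding, together with the new probe URL, can be sketched as follows. The helper names are invented for this example, and http://localhost is the same local test instance used in the URL above.

```python
from urllib.parse import urlencode


def padding_needed(per_page: int, page: int = 2) -> int:
    """Rows required to fill every page before the one we probe."""
    return per_page * (page - 1)


def api_probe_url(base: str, query: str, per_page: int = 1, page: int = 2) -> str:
    """Build the API search URL whose 200/404 status acts as the oracle."""
    params = urlencode(
        {"field": "provided", "page": page, "per_page": per_page, "q": query}
    )
    return f"{base}/api/v1/submissions?{params}"


for size in (50, 20, 1):
    print(size, "rows per page ->", padding_needed(size), "padding submissions")

print(api_probe_url("http://localhost", "CTF{"))
```

Note that urlencode percent-encodes the brace as %7B, which the server decodes back to the same query.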

Fully Automatic

One thing that was still bothering me was the amount of interaction required. The victim of this attack needs to be convinced to play your game and follow instructions carefully. This needs to be done well to avoid suspicion. We need this much interaction because a user's history should not be leakable by any random site, as that would be an invasion of privacy. We already looked at the :visited privacy considerations browsers put in place to prevent automated exfiltration of the change in style.

There have in the past been clever exploits that bypass these rules causing the rules to become more strict, but would there be any unfixed exploits left? One recent (2018) bug reported to Chromium is the following:

https://issues.chromium.org/issues/40091173

This issue involves applying a heavy style on the :visited selector and measuring re-paint timing by quickly swapping the href= attribute. A visited link would take longer to render than a non-visited one. With enough complicated characters, it would be a significant enough difference to be measured with requestAnimationFrame() executions. They even provide an attack.html reproduction case! The discussion moved to another unfixed issue, which potentially still works. Let's try it:

I honestly did not expect that to work, but it works perfectly! Not found pages are blue, and detected as 'unvisited', while purple visited links are detected as 'visited'. It takes half a second for each detection, but I am willing to pay that price for a fully automatic solution.
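The decision step of such a timing oracle boils down to comparing measurements against a known-unvisited baseline. The Python sketch below only models that comparison; in the browser the samples would come from requestAnimationFrame() timings. The threshold factor and all sample values are invented for illustration and are not measurements from the real exploit.

```python
from statistics import median


def classify_visited(candidate_ms: list, baseline_ms: list, factor: float = 1.5) -> bool:
    """Call a link 'visited' if its frames are clearly slower than the baseline."""
    return median(candidate_ms) > factor * median(baseline_ms)


# Made-up frame durations in milliseconds, purely for illustration.
baseline = [16.6, 16.8, 16.7]       # link known to be unvisited
slow_candidate = [41.0, 39.5, 43.2]  # heavy :visited style being repainted
fast_candidate = [16.9, 17.1, 16.5]  # no extra repaint work

print(classify_visited(slow_candidate, baseline))
print(classify_visited(fast_candidate, baseline))
```

Using the median rather than the mean makes the check less sensitive to a single slow frame caused by unrelated work on the page.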

The source code isn't the prettiest, so I made some improvements that allow it to be repeated. Here is an example that leaks the history to find a matching CTFd flag character:

In our exploit, we need to do this as fast as possible, so we can even run this parallel to visiting the URLs in the popup window. Visiting already required a slight wait for the page to load, and at the same time, we will wait for the CSS to render. Doing these simultaneously makes the wait barely any longer. It requires a single interaction to open the popup, after which the whole process can happen in the background while the user reads the attacker's page, for example.

This idea is implemented in the following gist, with a demo video of it below:

https://gist.github.com/JorianWoltjer/be36554d07a918ae3a811aa96ab74bd8

Conclusion

This method of leaking history to differentiate 200 and 404 responses seems to be a new XS-Leak technique that works with SameSite=Lax cookies, which are becoming more common across the web. I'm sure this kind of vulnerability can be found elsewhere on other targets.

CTFd has fixed this issue in v3.7.2 with the following code change (c8df400):

     submissions = (
         Submissions.query.filter_by(**filters_by)
         .filter(*filters)
         .join(Challenges)
         .join(Model)
         .order_by(Submissions.date.desc())
    -    .paginate(page=page, per_page=50)
    +    .paginate(page=page, per_page=50, error_out=False)
     )

This error_out option is documented in Flask-SQLAlchemy and, by default, aborts with a 404 error when no items are returned and the page is not the first. Setting it to False prevents this abort and shows an empty table instead, which is not detectable by XS-Leaks.
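The behaviour of this option can be modelled in plain Python to show why the fix removes the oracle. This is a simplified stand-in written for this post, not the library's implementation; `NotFound` represents the 404 abort.

```python
class NotFound(Exception):
    """Stands in for the HTTP 404 abort."""


def paginate(items: list, page: int, per_page: int, error_out: bool = True) -> list:
    """Return the rows for `page`, mimicking the empty-page behaviour described above."""
    start = (page - 1) * per_page
    rows = items[start:start + per_page]
    if error_out and not rows and page != 1:
        raise NotFound(f"page {page} is empty")
    return rows


matching = ["submission"] * 50  # exactly one full page of results

# Before the fix: an empty 2nd page aborts, which the attacker can detect.
try:
    paginate(matching, page=2, per_page=50)
except NotFound as exc:
    print("404:", exc)

# After the fix: the same request yields an empty page with a normal response.
print(paginate(matching, page=2, per_page=50, error_out=False))
```

With error_out=False both the matching and the non-matching query produce a successful response, so the history no longer differs between them.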

To ensure this kind of vulnerability never happens again on CTFd, another commit (c6a8ce0) adds the Cross-Origin-Opener-Policy header which blocks getting a window reference to the site. This prevents some DOM leaks like Frame Counting which abuses the window reference, and our attack because the .location property cannot be changed after this header is seen. The window acts as a closed window. Therefore, we cannot visit all XS-Search URLs in an automated way, requiring a new user interaction for every new popup we open. Together with SameSite=Lax cookies, this is a great defence against XS-Leaks.
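For readers who want to add the same header to their own application, a framework-independent way is a small WSGI wrapper. This is a generic sketch and not CTFd's actual commit; in particular, the post does not say which value CTFd sets, so same-origin below is an assumption.

```python
def add_coop(app, policy: str = "same-origin"):  # policy value is an assumption
    """Wrap a WSGI app so every response carries Cross-Origin-Opener-Policy."""
    def wrapped(environ, start_response):
        def start_with_header(status, headers, exc_info=None):
            # Drop any existing value so the header appears exactly once.
            headers = [h for h in headers if h[0].lower() != "cross-origin-opener-policy"]
            headers.append(("Cross-Origin-Opener-Policy", policy))
            return start_response(status, headers, exc_info)
        return app(environ, start_with_header)
    return wrapped


def demo_app(environ, start_response):
    """Minimal WSGI app used only to exercise the wrapper."""
    start_response("200 OK", [("Content-Type", "text/plain")])
    return [b"ok"]


captured = {}


def capture(status, headers, exc_info=None):
    captured["status"] = status
    captured["headers"] = dict(headers)


body = add_coop(demo_app)({}, capture)
print(captured["headers"])
```

Applying the header to every response, rather than only to sensitive pages, avoids having to reason about which endpoints could act as an oracle.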

CTFd were very pleasant to work with. The timeline of reporting was as follows:

- March 3rd 2024: Initial report sent

- March 4th 2024: CTFd looks at the report

- March 10th 2024: I update that the API is also vulnerable

- March 13th 2024: CTFd finishes the investigation and asks some follow-up questions

- March 14th 2024: CTFd fixes the issue

- March 19th 2024: CTFd version 3.7.2 release